Adaptive distances

In this notebook, we illustrate the use of adaptive distances implemented in pyABC. “Adaptive” means that the distance function is not pre-defined (e.g. after pre-processing), but adjusts over time during the ABC run. Here, we provide adaptation for data scale normalization and outliers. General adaptation concepts can be easily implemented via the Distance class’ initialize() and update() methods. Adaptive distances are implemented as extensions of the PNormDistance.

It uses a distance between simulations \(s\) and observed data \(s_\text{obs}\) (or summary statistics representations thereof)

with weights \(w\) and exponent \(p\geq 1\). E.g., \(p=2\) gives a weighted Euclidean distance, \(p=1\) a Manhattan distance, \(p=\inf\) a maximum distance.

The notebook can be downloaded here.

Scale normalization

The AdaptivePNormDistance offers methods to, in each generation (or, if desired, only in selected generations), recalculate the weights in order to rescale the impact of each coordinate. This can be useful if different summary statistics vary on different scales, in which case statistics with higher sample variance would dominate the distance value and thus the analysis, while proper rescaling ensures that each statistic has a roughly similar impact.

The concept is based on this seminal work by Dennis Prangle 2017:

Dennis Prangle.

Adapting the ABC distance function.

Bayesian Analysis 12.1 (2017): 289-309.

For illustration, we consider a simple Gaussian model:

[ ]:

# install if not done yet

!pip install pyabc --quiet

[1]:

import logging

import tempfile

import matplotlib.pyplot as pyplot

import numpy as np

import pyabc

[2]:

pyabc.settings.set_figure_params("pyabc") # for beautified plots

# for debugging

df_logger = logging.getLogger("ABC.Distance")

df_logger.setLevel(logging.DEBUG)

# model definition

def model(p):

return {

"s1": p["theta"] + 1 + 0.1 * np.random.normal(),

"s2": 2 + 10 * np.random.normal(),

}

# true model parameter

theta_true = 3

# observed summary statistics

observation = {"s1": theta_true + 1, "s2": 2}

# prior distribution

prior = pyabc.Distribution(theta=pyabc.RV("uniform", 0, 10))

# database

db_path = pyabc.create_sqlite_db_id(file_="adaptive_distance.db")

def plot_history(history):

"""Plot 1d posteriors over time."""

fig, ax = pyplot.subplots()

for t in range(history.max_t + 1):

df, w = history.get_distribution(m=0, t=t)

pyabc.visualization.plot_kde_1d(

df,

w,

xmin=0,

xmax=10,

x="theta",

ax=ax,

label=f"PDF t={t}",

refval={"theta": theta_true},

refval_color="grey",

)

ax.legend()

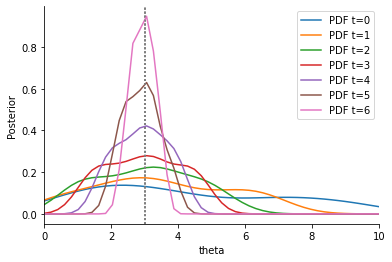

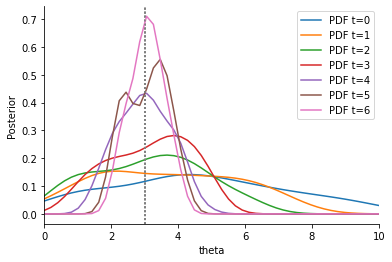

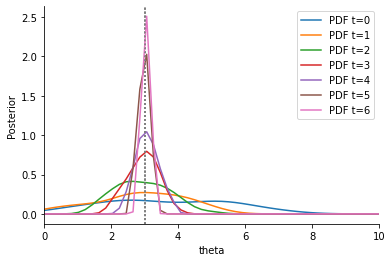

Summary statistic s2 has a high variance compared to summary statistic s1. In addition, s1 is informative about the model parameters \(\theta\), s2 is not. We expect that the proposal distribution for \(\theta\) iteratively centers around the true value \(\theta=3\). Thus, the variability for the sampled s1 decreases iteratively, while the variability of the sampled s2 stays approximately constant. If both summary statistics are weighted similarly in the calculation of the distance between sample and observation, there is hence an undesirable high impact of s2, so that convergence can be slowed down. In contrast, if we weight s1 higher, we may hope that our estimation of \(\theta\) is improved.

These informal expectations being stated, let us continue with the implementation. First, we consider a non-adaptive Euclidean distance with uniform weights (i.e. \(w\equiv 1\)):

[3]:

distance = pyabc.PNormDistance(p=2)

abc = pyabc.ABCSMC(model, prior, distance)

abc.new(db_path, observation)

h_uni = abc.run(max_nr_populations=7)

plot_history(h_uni)

ABC.Sampler INFO: Parallelize sampling on 4 processes.

ABC.History INFO: Start <ABCSMC id=1, start_time=2022-03-25 17:56:05>

ABC INFO: Calibration sample t = -1.

ABC INFO: t: 0, eps: 7.03999965e+00.

ABC INFO: Accepted: 100 / 220 = 4.5455e-01, ESS: 1.0000e+02.

ABC INFO: t: 1, eps: 4.51503638e+00.

ABC INFO: Accepted: 100 / 425 = 2.3529e-01, ESS: 9.4379e+01.

ABC INFO: t: 2, eps: 3.06944258e+00.

ABC INFO: Accepted: 100 / 613 = 1.6313e-01, ESS: 9.6825e+01.

ABC INFO: t: 3, eps: 2.06373619e+00.

ABC INFO: Accepted: 100 / 926 = 1.0799e-01, ESS: 9.8871e+01.

ABC INFO: t: 4, eps: 1.59843112e+00.

ABC INFO: Accepted: 100 / 1282 = 7.8003e-02, ESS: 9.9531e+01.

ABC INFO: t: 5, eps: 1.03245438e+00.

ABC INFO: Accepted: 100 / 1856 = 5.3879e-02, ESS: 9.8850e+01.

ABC INFO: t: 6, eps: 7.35771496e-01.

ABC INFO: Accepted: 100 / 3165 = 3.1596e-02, ESS: 9.7844e+01.

ABC INFO: Stop: Maximum number of generations.

ABC.History INFO: Done <ABCSMC id=1, duration=0:00:06.476983, end_time=2022-03-25 17:56:12>

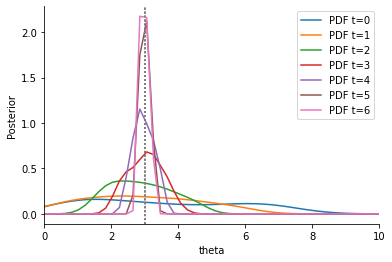

Second, we consider an adaptive Euclidean distance:

[4]:

scale_log_file = tempfile.mkstemp(suffix=".json")[1]

distance_adaptive = pyabc.AdaptivePNormDistance(

p=2,

scale_function=pyabc.distance.mad, # method by which to scale

scale_log_file=scale_log_file,

)

abc = pyabc.ABCSMC(model, prior, distance_adaptive)

abc.new(db_path, observation)

h_ada = abc.run(max_nr_populations=7)

plot_history(h_ada)

ABC.Distance DEBUG: Fit scale ixs: <EventIxs, ts={inf}>

ABC.Sampler INFO: Parallelize sampling on 4 processes.

ABC.History INFO: Start <ABCSMC id=2, start_time=2022-03-25 17:56:12>

ABC INFO: Calibration sample t = -1.

ABC.Distance DEBUG: Scale weights[0] = {'s1': 3.5668e-01, 's2': 1.3377e-01}

ABC.Population INFO: Recording also rejected particles: True

ABC INFO: t: 0, eps: 1.72412322e+00.

ABC INFO: Accepted: 100 / 170 = 5.8824e-01, ESS: 1.0000e+02.

ABC.Distance DEBUG: Scale weights[1] = {'s1': 3.5670e-01, 's2': 1.6313e-01}

ABC INFO: t: 1, eps: 1.18844229e+00.

ABC INFO: Accepted: 100 / 266 = 3.7594e-01, ESS: 9.7254e+01.

ABC.Distance DEBUG: Scale weights[2] = {'s1': 5.6878e-01, 's2': 1.5639e-01}

ABC INFO: t: 2, eps: 1.01577127e+00.

ABC INFO: Accepted: 100 / 406 = 2.4631e-01, ESS: 9.9213e+01.

ABC.Distance DEBUG: Scale weights[3] = {'s1': 7.9713e-01, 's2': 1.4391e-01}

ABC INFO: t: 3, eps: 8.16917955e-01.

ABC INFO: Accepted: 100 / 430 = 2.3256e-01, ESS: 9.8489e+01.

ABC.Distance DEBUG: Scale weights[4] = {'s1': 1.3874e+00, 's2': 1.4831e-01}

ABC INFO: t: 4, eps: 7.41906064e-01.

ABC INFO: Accepted: 100 / 488 = 2.0492e-01, ESS: 9.8404e+01.

ABC.Distance DEBUG: Scale weights[5] = {'s1': 2.4945e+00, 's2': 1.4836e-01}

ABC INFO: t: 5, eps: 6.54733733e-01.

ABC INFO: Accepted: 100 / 775 = 1.2903e-01, ESS: 9.8508e+01.

ABC.Distance DEBUG: Scale weights[6] = {'s1': 3.7143e+00, 's2': 1.5201e-01}

ABC INFO: t: 6, eps: 6.09213308e-01.

ABC INFO: Accepted: 100 / 736 = 1.3587e-01, ESS: 9.1398e+01.

ABC.Distance DEBUG: Scale weights[7] = {'s1': 7.6311e+00, 's2': 1.4161e-01}

ABC INFO: Stop: Maximum number of generations.

ABC.History INFO: Done <ABCSMC id=2, duration=0:00:04.290164, end_time=2022-03-25 17:56:16>

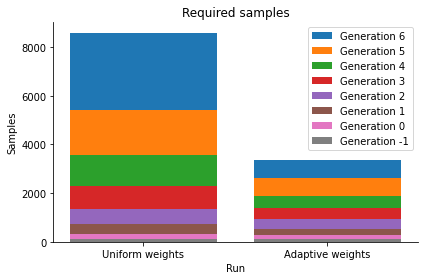

In the debug output of abc.run above, it can be seen how the weights evolve over time. In the posterior plot, we observe how, compared to the non-adaptive distance, the densitities tend to be narrower around the true parameter \(\theta=3\). In addition, despite the better convergence, the required number of samples in total is lower, as can be seen from the below sample numbers plot, as not so much time was wasted trying to match an uninformative summary statistic.

[5]:

histories = [h_uni, h_ada]

labels = ["Uniform weights", "Adaptive weights"]

pyabc.visualization.plot_sample_numbers(histories, labels)

[5]:

<AxesSubplot:title={'center':'Required samples'}, xlabel='Run', ylabel='Samples'>

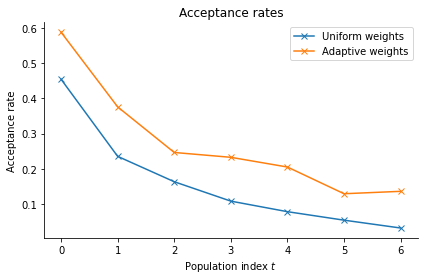

Indeed, the acceptance rates for the adaptive distance function are consistently higher:

[6]:

pyabc.visualization.plot_acceptance_rates_trajectory(histories, labels)

[6]:

<AxesSubplot:title={'center':'Acceptance rates'}, xlabel='Population index $t$', ylabel='Acceptance rate'>

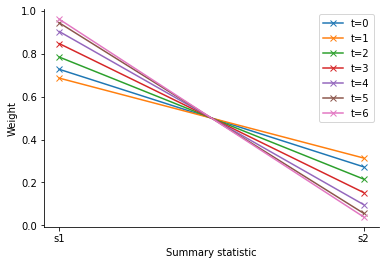

The log file stores the weights of each generation, allowing for further analysis. We can see that indeed ss1 is assigned a higher weight than ss2, the ratio increasing over time.

[7]:

ts = list(range(h_ada.max_t + 1))

labels = [f"t={t}" for t in ts]

pyabc.visualization.plot_distance_weights(

log_files=scale_log_file,

ts=ts,

labels=labels,

)

[7]:

<AxesSubplot:xlabel='Summary statistic', ylabel='Weight'>

In detail, the adaptive distance feature works as follows: In each iteration of the ABCSMC run, after having obtained the desired number of accepted particles (and once at the beginning using a calibration sample from the prior), the method update() is called. It is given a set of summary statistics which can be used to e.g. compute weights for the distance measure in the next iteration. In order to avoid bias, via configure_sampler(), the distance function tells the sampler to not only

record accepted particles, but all that were generated during the sampling process.

Above, the acceptance step uses in each generation only the distance based on the current weights. Sometimes it may be preferable to have nested acceptance regions, by also checking all previous acceptance criteria. This can be realized by passing a acceptor=pyabc.UniformAcceptor(use_complete_history=True) to the ABCSMC class.

For scale normalization, we used as a measure of sample variability above the median absolute deviation (MAD), a robust alternative to the standard deviation. Other measures are possible and implemented in pyabc.distance.scale.

Robustify against model error

One problem not addressed by the previous adaptive weighting scheme is that if there are outliers in the data, things can go wrong. We informally denote by an outlier a data point corrupted by large errors that cannot be expected under the given experimental setup, already accounting for measurement noise. To circumvent this, robust methods have been developed that are less sensitive to outliers and can even detect and down-weight such points. The methods presented in the following are based on this work:

Yannik Schaelte, Emad Alamoudi, Jan Hasenauer.

Robust adaptive distance functions for approximate Bayesian inference on outlier-corrupted data.

bioRxiv 2021.07.29.454327; doi: https://doi.org/10.1101/2021.07.29.454327.

As an example, consider the previous model, but with an additional summary statistic of small variance, whose observation however is an outlier, i.e. a deviant value that cannot be explained under the model. This is a scenario that can typically occur in data where some coordinates hardly depend on the inputs, some may however be subject to errors not accounted for by the model due to e.g. external perturbations, or human errors such as wrong or missing labels. Also other outlier scenarios are possible, for a more extensive discussion see the publication.

[8]:

import logging

import os

import tempfile

import matplotlib.pyplot as pyplot

import numpy as np

import pyabc.visualization

# for debugging

df_logger = logging.getLogger("Distance")

df_logger.setLevel(logging.DEBUG)

# model definition

def model(p):

return {

"s1": p["theta"] + 1 + 0.1 * np.random.normal(),

"s2": 2 + 10 * np.random.normal(),

"s3": 2 + 0.1 * np.random.normal(),

}

# true model parameter

theta_true = 3

# observed summary statistics

observation = {"s1": theta_true + 1, "s2": 2, "s3": 5}

# prior distribution

prior = pyabc.Distribution(theta=pyabc.RV("uniform", 0, 10))

# database

db_path = pyabc.create_sqlite_db_id(file_="adaptive_distance.db")

def plot_history(history):

"""Plot 1d posteriors over time."""

fig, ax = pyplot.subplots()

for t in range(history.max_t + 1):

df, w = history.get_distribution(m=0, t=t)

pyabc.visualization.plot_kde_1d(

df,

w,

xmin=0,

xmax=10,

x="theta",

ax=ax,

label=f"PDF t={t}",

refval={"theta": theta_true},

refval_color="grey",

)

ax.legend()

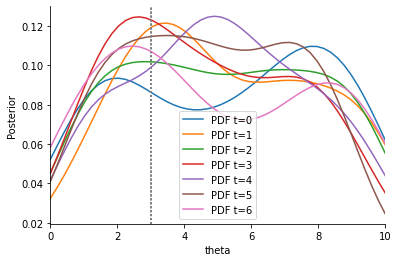

An distance with uniform weights suffers from the same problems as before.

[9]:

distance = pyabc.PNormDistance(p=2)

abc = pyabc.ABCSMC(model, prior, distance)

abc.new(db_path, observation)

h_uni = abc.run(max_nr_populations=7)

plot_history(h_uni)

ABC.Sampler INFO: Parallelize sampling on 4 processes.

ABC.History INFO: Start <ABCSMC id=3, start_time=2022-03-25 17:56:22>

ABC INFO: Calibration sample t = -1.

ABC INFO: t: 0, eps: 8.83875673e+00.

ABC INFO: Accepted: 100 / 176 = 5.6818e-01, ESS: 1.0000e+02.

ABC INFO: t: 1, eps: 5.92014462e+00.

ABC INFO: Accepted: 100 / 292 = 3.4247e-01, ESS: 9.4200e+01.

ABC INFO: t: 2, eps: 4.52756560e+00.

ABC INFO: Accepted: 100 / 537 = 1.8622e-01, ESS: 9.7015e+01.

ABC INFO: t: 3, eps: 3.79064700e+00.

ABC INFO: Accepted: 100 / 872 = 1.1468e-01, ESS: 9.7878e+01.

ABC INFO: t: 4, eps: 3.46070308e+00.

ABC INFO: Accepted: 100 / 1222 = 8.1833e-02, ESS: 9.8035e+01.

ABC INFO: t: 5, eps: 3.20079151e+00.

ABC INFO: Accepted: 100 / 1404 = 7.1225e-02, ESS: 9.7536e+01.

ABC INFO: t: 6, eps: 3.08406668e+00.

ABC INFO: Accepted: 100 / 3623 = 2.7601e-02, ESS: 9.6037e+01.

ABC INFO: Stop: Maximum number of generations.

ABC.History INFO: Done <ABCSMC id=3, duration=0:00:07.100998, end_time=2022-03-25 17:56:29>

Next, we consider again a Euclidean distance with adaptive weights.

[10]:

distance_adaptive = pyabc.AdaptivePNormDistance(

p=2,

scale_function=pyabc.distance.mad,

)

abc = pyabc.ABCSMC(

model,

prior,

distance_adaptive,

acceptor=pyabc.UniformAcceptor(use_complete_history=True),

)

abc.new(db_path, observation)

h_ada = abc.run(max_nr_populations=7)

plot_history(h_ada)

ABC.Distance DEBUG: Fit scale ixs: <EventIxs, ts={inf}>

ABC.Sampler INFO: Parallelize sampling on 4 processes.

ABC.History INFO: Start <ABCSMC id=4, start_time=2022-03-25 17:56:30>

ABC INFO: Calibration sample t = -1.

ABC.Distance DEBUG: Scale weights[0] = {'s1': 4.0250e-01, 's2': 1.4861e-01, 's3': 1.4870e+01}

ABC.Population INFO: Recording also rejected particles: True

ABC INFO: t: 0, eps: 4.47989200e+01.

ABC INFO: Accepted: 100 / 198 = 5.0505e-01, ESS: 1.0000e+02.

ABC.Distance DEBUG: Scale weights[1] = {'s1': 3.6312e-01, 's2': 1.5042e-01, 's3': 1.4881e+01}

ABC INFO: t: 1, eps: 4.38143932e+01.

ABC INFO: Accepted: 100 / 344 = 2.9070e-01, ESS: 9.7743e+01.

ABC.Distance DEBUG: Scale weights[2] = {'s1': 4.3046e-01, 's2': 1.4578e-01, 's3': 1.5332e+01}

ABC INFO: t: 2, eps: 4.44628941e+01.

ABC INFO: Accepted: 100 / 557 = 1.7953e-01, ESS: 9.5587e+01.

ABC.Distance DEBUG: Scale weights[3] = {'s1': 4.5866e-01, 's2': 1.4819e-01, 's3': 1.4176e+01}

ABC INFO: t: 3, eps: 4.04222910e+01.

ABC INFO: Accepted: 100 / 1450 = 6.8966e-02, ESS: 9.7915e+01.

ABC.Distance DEBUG: Scale weights[4] = {'s1': 4.3275e-01, 's2': 1.4706e-01, 's3': 1.3978e+01}

ABC INFO: t: 4, eps: 3.94279313e+01.

ABC INFO: Accepted: 100 / 2733 = 3.6590e-02, ESS: 9.3574e+01.

ABC.Distance DEBUG: Scale weights[5] = {'s1': 4.6633e-01, 's2': 1.5571e-01, 's3': 1.5107e+01}

ABC INFO: t: 5, eps: 4.20907747e+01.

ABC INFO: Accepted: 100 / 6682 = 1.4966e-02, ESS: 9.6457e+01.

ABC.Distance DEBUG: Scale weights[6] = {'s1': 4.9762e-01, 's2': 1.5082e-01, 's3': 1.5126e+01}

ABC INFO: t: 6, eps: 4.17007190e+01.

ABC INFO: Accepted: 100 / 16089 = 6.2154e-03, ESS: 8.6932e+01.

ABC.Distance DEBUG: Scale weights[7] = {'s1': 4.6989e-01, 's2': 1.4659e-01, 's3': 1.4655e+01}

ABC INFO: Stop: Maximum number of generations.

ABC.History INFO: Done <ABCSMC id=4, duration=0:00:16.092430, end_time=2022-03-25 17:56:46>

These results are as expected: The adaptive weights make the situation much worse, as the impact of the low-variance outlier statistic is increased. Our solution is to firstly use outlier-robust metrics such as a Manhattan distance (p=1), instead of the Euclidean distance used above, which emphasizes large errors.

[11]:

scale_log_file_l1 = tempfile.mkstemp(suffix=".json")[1]

distance_adaptive = pyabc.AdaptivePNormDistance(

p=1, # new, previously p=2

scale_function=pyabc.distance.mad,

scale_log_file=scale_log_file_l1,

)

abc = pyabc.ABCSMC(

model,

prior,

distance_adaptive,

acceptor=pyabc.UniformAcceptor(use_complete_history=True),

)

abc.new(db_path, observation)

h_l1 = abc.run(max_nr_populations=7)

plot_history(h_l1)

ABC.Distance DEBUG: Fit scale ixs: <EventIxs, ts={inf}>

ABC.Sampler INFO: Parallelize sampling on 4 processes.

ABC.History INFO: Start <ABCSMC id=5, start_time=2022-03-25 17:56:46>

ABC INFO: Calibration sample t = -1.

ABC.Distance DEBUG: Scale weights[0] = {'s1': 3.7101e-01, 's2': 1.7356e-01, 's3': 1.2818e+01}

ABC.Population INFO: Recording also rejected particles: True

ABC INFO: t: 0, eps: 4.06814929e+01.

ABC INFO: Accepted: 100 / 210 = 4.7619e-01, ESS: 1.0000e+02.

ABC.Distance DEBUG: Scale weights[1] = {'s1': 3.6031e-01, 's2': 1.5561e-01, 's3': 1.4466e+01}

ABC INFO: t: 1, eps: 4.41407080e+01.

ABC INFO: Accepted: 100 / 448 = 2.2321e-01, ESS: 8.7540e+01.

ABC.Distance DEBUG: Scale weights[2] = {'s1': 4.3109e-01, 's2': 1.4271e-01, 's3': 1.4640e+01}

ABC INFO: t: 2, eps: 4.42378486e+01.

ABC INFO: Accepted: 100 / 648 = 1.5432e-01, ESS: 9.5623e+01.

ABC.Distance DEBUG: Scale weights[3] = {'s1': 4.9523e-01, 's2': 1.5261e-01, 's3': 1.4854e+01}

ABC INFO: t: 3, eps: 4.42414461e+01.

ABC INFO: Accepted: 100 / 923 = 1.0834e-01, ESS: 9.1566e+01.

ABC.Distance DEBUG: Scale weights[4] = {'s1': 7.5066e-01, 's2': 1.5755e-01, 's3': 1.5398e+01}

ABC INFO: t: 4, eps: 4.53395178e+01.

ABC INFO: Accepted: 100 / 1773 = 5.6402e-02, ESS: 9.4182e+01.

ABC.Distance DEBUG: Scale weights[5] = {'s1': 8.9670e-01, 's2': 1.4815e-01, 's3': 1.5066e+01}

ABC INFO: t: 5, eps: 4.39434732e+01.

ABC INFO: Accepted: 100 / 2823 = 3.5423e-02, ESS: 7.6064e+01.

ABC.Distance DEBUG: Scale weights[6] = {'s1': 1.3076e+00, 's2': 1.5372e-01, 's3': 1.4739e+01}

ABC INFO: t: 6, eps: 4.28698245e+01.

ABC INFO: Accepted: 100 / 5050 = 1.9802e-02, ESS: 9.2703e+01.

ABC.Distance DEBUG: Scale weights[7] = {'s1': 1.2742e+00, 's2': 1.4557e-01, 's3': 1.4966e+01}

ABC INFO: Stop: Maximum number of generations.

ABC.History INFO: Done <ABCSMC id=5, duration=0:00:08.005392, end_time=2022-03-25 17:56:54>

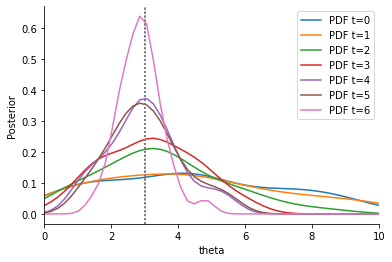

This visibly improves the estimates again. However, the outlier statistic still seems to have a considerable impact, as the estimates are considerably worse than without s3. This can be tackled by actively identifying and down-weighting outlier data points, by using a different weighting scheme than MAD. We propose to use the PCMAD (Perhaps-Combined MAD), which either uses MAD, or, if the fraction of severe outliers detected is not too high (precisely not more than 1/3, see the publication and the API for details), uses MAD + MADO, where MADO is the median absolute deviation to the observed value.

[12]:

scale_log_file_pcmad = tempfile.mkstemp(suffix=".json")[1]

distance_adaptive = pyabc.AdaptivePNormDistance(

p=1,

scale_function=pyabc.distance.pcmad, # new, previously mad

scale_log_file=scale_log_file_pcmad,

)

abc = pyabc.ABCSMC(

model,

prior,

distance_adaptive,

acceptor=pyabc.UniformAcceptor(use_complete_history=True),

)

abc.new(db_path, observation)

h_pcmad = abc.run(max_nr_populations=7)

plot_history(h_pcmad)

ABC.Distance DEBUG: Fit scale ixs: <EventIxs, ts={inf}>

ABC.Sampler INFO: Parallelize sampling on 4 processes.

ABC.History INFO: Start <ABCSMC id=6, start_time=2022-03-25 17:56:55>

ABC INFO: Calibration sample t = -1.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[0] = {'s1': 2.0023e-01, 's2': 6.4406e-02, 's3': 3.2667e-01}

ABC.Population INFO: Recording also rejected particles: True

ABC INFO: t: 0, eps: 2.04345057e+00.

ABC INFO: Accepted: 100 / 214 = 4.6729e-01, ESS: 1.0000e+02.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[1] = {'s1': 2.0080e-01, 's2': 6.7454e-02, 's3': 3.2413e-01}

ABC INFO: t: 1, eps: 1.64061092e+00.

ABC INFO: Accepted: 100 / 270 = 3.7037e-01, ESS: 9.1801e+01.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[2] = {'s1': 3.3611e-01, 's2': 7.4539e-02, 's3': 3.2369e-01}

ABC INFO: t: 2, eps: 1.63893421e+00.

ABC INFO: Accepted: 100 / 348 = 2.8736e-01, ESS: 9.6167e+01.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[3] = {'s1': 5.3300e-01, 's2': 7.4961e-02, 's3': 3.2599e-01}

ABC INFO: t: 3, eps: 1.54996332e+00.

ABC INFO: Accepted: 100 / 394 = 2.5381e-01, ESS: 9.8993e+01.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[4] = {'s1': 7.4951e-01, 's2': 7.9142e-02, 's3': 3.2542e-01}

ABC INFO: t: 4, eps: 1.48381284e+00.

ABC INFO: Accepted: 100 / 482 = 2.0747e-01, ESS: 8.8783e+01.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[5] = {'s1': 1.3840e+00, 's2': 7.5257e-02, 's3': 3.2620e-01}

ABC INFO: t: 5, eps: 1.43561651e+00.

ABC INFO: Accepted: 100 / 733 = 1.3643e-01, ESS: 9.7654e+01.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[6] = {'s1': 1.8054e+00, 's2': 7.5495e-02, 's3': 3.2604e-01}

ABC INFO: t: 6, eps: 1.33302716e+00.

ABC INFO: Accepted: 100 / 874 = 1.1442e-01, ESS: 8.7795e+01.

ABC.Distance INFO: Features ['s3'] (ixs=[2]) have a high bias.

ABC.Distance DEBUG: Scale weights[7] = {'s1': 3.3984e+00, 's2': 7.4986e-02, 's3': 3.2583e-01}

ABC INFO: Stop: Maximum number of generations.

ABC.History INFO: Done <ABCSMC id=6, duration=0:00:04.394688, end_time=2022-03-25 17:56:59>

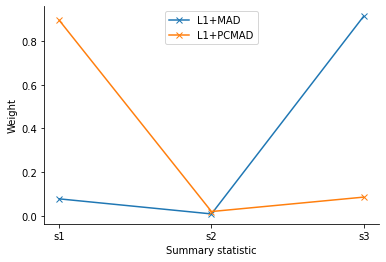

This visibly improves the obtained estimates further. This is due to the respective weights assigned: PCMAD correctly identifies the outlier and assigns a low weight.

[13]:

pyabc.visualization.plot_distance_weights(

[scale_log_file_l1, scale_log_file_pcmad],

labels=["L1+MAD", "L1+PCMAD"],

)

[13]:

<AxesSubplot:xlabel='Summary statistic', ylabel='Weight'>

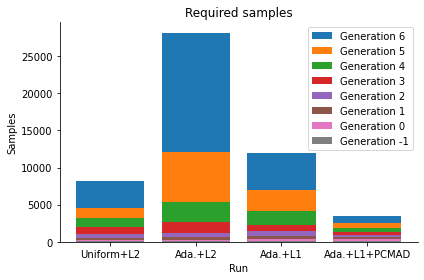

This result is further obtained with less samples.

[14]:

pyabc.visualization.plot_sample_numbers(

[h_uni, h_ada, h_l1, h_pcmad],

["Uniform+L2", "Ada.+L2", "Ada.+L1", "Ada.+L1+PCMAD"],

)

[14]:

<AxesSubplot:title={'center':'Required samples'}, xlabel='Run', ylabel='Samples'>

Conclusion

In general, we recommend the consistent use of an L1 norm over L2, unless squared residuals are needed. This makes the analysis robust to outliers, and further has also shown advantageous on high-dimensional data, where highly deviant points are likely to exist. Further, PCMAD has proven stable and advantageous on multiple test problems, on both outlier-free and outlier-corrupted data. On highly flexible models, it may be unable to reliably detect errors, such that it resorts to MAD. In the implementation in pyABC, PCMAD informs about potential outliers early-on via the logging modules.

Problems

The scale-normalized outlier-robust distance functions presented above clearly still have several shortcomings. In particular, they do not account for how “informative” data points are. This can e.g. be problematic if the data contain large quantities of uninformative data points with background noise that would be enlarged by the here-employed scale normalization. Another problem occurs when many data points are informative of one parameter, but only few of another, in which case one could

argue that the impact of the many and the few should be balanced better in order to extract information on both parameters. To some degree, these problems can be tackled by additional fixed_weights, which pyABC allows to define manually, but this may be cumbersome, and it may be preferable to a-priori define a set of similarly informative summary statistics, e.g. using the regression-based summary

statistics by Fearnhead and Prangle, which are also implemented in pyABC.

For an overview of approaches accounting for both scale and informativeness of data, see the notebook on “Informative distances and summary statistics”.